mDecks Music Apps That Use AudioKit

At mDecks Music we develop apps to study and practice music and music theory, mostly for intermediate to advanced musicians.

Our apps need to interact with the user in many ways: playing anything from simple chords with sampled sounds to complex accompaniments, receiving and sending MIDI data, and listening to audio input from the user and converting it into useful musical information.

Without a doubt, using AudioKit allowed us to achieve all these tasks easily and with reliable results.

Here are some of the apps where we used AudioKit:

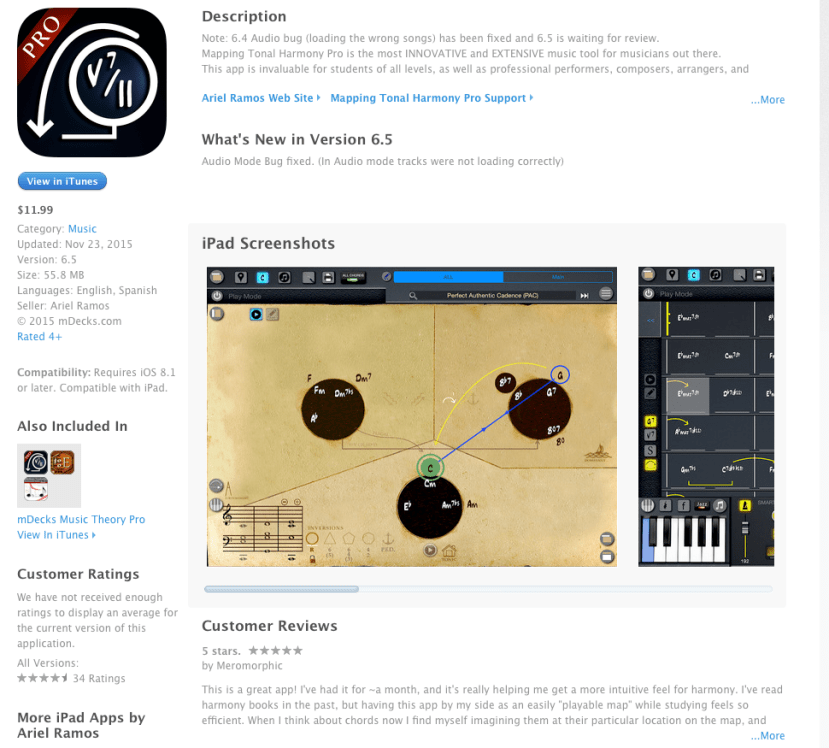

In Mapping Tonal Harmony Pro and Tessitura Pro we play our own sampled sounds and create and play MIDI sequences, and the user can mix volume levels, adjust the reverb amount and change the tempo live while the sequence is playing.

Mapping Tonal Harmony Pro is an app with an interactive map that reveals the secrets of harmony. You can study harmony in different styles from Classical to Jazz and Pop, write your own songs and create charts, or use it as a play-along to practice improvisation.

Tessitura Pro contains all scales & modes in music. You can study how modes relate to each other, source scales, tensions vs. chord-tones and also practice using different melodic patterns and approach notes.

See Music is a sight-reading app that listens to the player and gives instant note-by-note feedback on their performance. In it we were able not only to identify pitch, but also to transcribe the entire performance into standard music notation and include a pitch accuracy report on every note in the score.

When we were designing the app, the hardest decision was what to use for pitch recognition.

Implementing code that analyzes audio and turns it into pitch and note-length information involves a lot of advanced math and low-level access to memory and functions. We soon realized this was much tougher than expected.

After finding AudioKit, we realized that 90% of the work had already been done. The library is simple to incorporate into a project, well documented and works really well.

We were able to handle the entire process using just the AKFrequencyTracker, which returns frequency and amplitude.

Since we wanted to analyze an entire musical phrase we needed something a bit more elaborate than a simple tuner.

In our solution we used a timer to store all the data received from the tracker:

```swift
conductor.mic.start()
conductor.tracker.start()

timerito = Timer.scheduledTimer(timeInterval: timeBetweenReads,
                                target: self,
                                selector: #selector(self.readAndSaveNotes),
                                userInfo: nil,
                                repeats: true)
```

The readAndSaveNotes function simply stores the data at regular intervals (timeBetweenReads), with 3 different listening modes (readStyle):

```swift
@objc func readAndSaveNotes() {
    if isListening {
        let amplitude: Float = Float(conductor.tracker.amplitude)
        let frequency: Float = Float(conductor.tracker.frequency)

        if frequency < K.CurrentFreq {
            // Start recording once the input is loud enough
            // (this trigger is skipped in the "start right away" mode).
            if (!isRecording && amplitude > minAmpStartTrigger) && (readStyle != K.KReadForASetAmountOfTimeStartRightAway) {
                isRecording = true
                listeningStartTime = Date().timeIntervalSinceReferenceDate
            }

            if isRecording {
                switch readStyle {
                case K.kReadUntilSilence:
                    // Keep storing readings until the amplitude drops below the end trigger.
                    if amplitude > minAmpEndTrigger {
                        recordNote(f: frequency, a: amplitude)
                    } else if thereIsData {
                        stopListening()
                    }
                case K.kReadForASetAmountOfTime:
                    if !isTimeToStop {
                        recordNote(f: frequency, a: amplitude)
                    } else {
                        stopListening(processNotas: true, compareNotas: true)
                    }
                case K.KReadForASetAmountOfTimeStartRightAway:
                    if !isTimeToStop {
                        recordNote(f: frequency, a: amplitude)
                    } else {
                        stopListening(processNotas: true, compareNotas: true)
                    }
                case K.kTuning:
                    // Tuner mode: report each reading instead of storing it.
                    reportNote(f: frequency, a: amplitude)
                default:
                    break
                }
            }
        }
    }
}
```

We found the biggest challenges were how to ignore background noise, how to re-interpret frequency based on the instrument’s timbre, and how to get accurate start and end times for each note (since the player is playing a melodic line).

Since See Music is an app for all instruments, it must correctly interpret the notes played by instruments with different timbres.

The weight of the overtones is different on every instrument, so the frequencies the AKFrequencyTracker collects for a single note are usually a set of related frequencies that depends on the instrument’s timbre.

We found the best way to handle this was to parametrize the way we collect the data from the AKFrequencyTracker for each instrument.

Here’s an example of the parameter settings for a default instrument:

```swift
var zeroAmplitudThreshold: Float = 0.005
var noiseAmplitudeThreshold: Float = 0.1      // where notes are probably noise
var timeBetweenReads: TimeInterval = 0.025    // how fast to read
var peakThreshold: Float = 0.07               // to consider a new sample a peak
var minimumNoteDurationInIndexes: Int = 3     // how many samples are good for noteDuration
```
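To make the role of these parameters more concrete, here is a minimal sketch of one way thresholds like these could be used to turn a stream of tracker readings into notes with start times and durations. It is an illustration only, not the code from See Music: the `Reading` and `NoteEvent` types and the `nearestMidi` and `segmentNotes` functions are hypothetical names, the grouping rule (consecutive readings that round to the same pitch) is an assumption, and only three of the parameters above are used.

```swift
import Foundation

// Hypothetical types for this sketch; they are not part of AudioKit or of the app.
struct Reading {
    let frequency: Double   // Hz, as reported by a frequency tracker
    let amplitude: Double
}

struct NoteEvent {
    let midi: Int
    let start: TimeInterval
    let duration: TimeInterval
}

/// Nearest MIDI note number in equal temperament (A4 = 440 Hz = MIDI 69).
func nearestMidi(_ frequency: Double) -> Int {
    return Int((69.0 + 12.0 * log2(frequency / 440.0)).rounded())
}

/// Groups consecutive readings that are loud enough and round to the same pitch,
/// then discards groups shorter than `minimumNoteDurationInIndexes` readings.
func segmentNotes(_ readings: [Reading],
                  noiseAmplitudeThreshold: Double = 0.1,
                  timeBetweenReads: TimeInterval = 0.025,
                  minimumNoteDurationInIndexes: Int = 3) -> [NoteEvent] {
    var notes: [NoteEvent] = []
    var runMidi: Int? = nil   // pitch of the run in progress, nil while in noise/silence
    var runStart = 0          // index of the first reading in the current run

    // Ends the current run at index `end` (exclusive) and keeps it if long enough.
    func closeRun(at end: Int) {
        guard let midi = runMidi else { return }
        let length = end - runStart
        if length >= minimumNoteDurationInIndexes {
            notes.append(NoteEvent(midi: midi,
                                   start: Double(runStart) * timeBetweenReads,
                                   duration: Double(length) * timeBetweenReads))
        }
        runMidi = nil
    }

    for (i, reading) in readings.enumerated() {
        // Readings below the noise threshold are treated as "no note".
        let isSound = reading.amplitude >= noiseAmplitudeThreshold && reading.frequency > 0
        let midi: Int? = isSound ? nearestMidi(reading.frequency) : nil
        if midi != runMidi {
            closeRun(at: i)
            runMidi = midi
            runStart = i
        }
    }
    closeRun(at: readings.count)
    return notes
}
```

With the default values, a pitch has to be held for at least 3 readings (75 ms) to count as a note, so a single stray reading an octave too high is dropped rather than transcribed.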

Also, to identify the notes, don’t forget to reduce the frequencies to the pitch and octave that make sense on an instrument.

Here’s a simple class we used to reduce the frequencies and identify notes:

```swift
class MDXSemiFrequencyAmplitude: MDXSimpleFrequencyAmplitude {
    let kpl: MDXPitchListenerConstants = MDXPitchListenerConstants.sharedPitchListenerConstants
    let game: MDXGame = MDXGame.sharedGame

    var reducedFrequency: Float = 1.0

    func calcReducedFrequency() {
        var rF: Float = frequency
        let minF: Float = kpl.reducedFreqs[0]
        let maxF: Float = kpl.reducedFreqs[11]
        while rF > maxF {
            rF /= 2.0
        }
        while rF < minF {
            rF *= 2.0
        }
        reducedFrequency = rF
    }

    var expectedRedFreq: Float = 0.0

    var expectedFreq: Float {
        get {
            return powf(2, Float(octave)) * expectedRedFreq
        }
    }

    var octave: Int = 0
    var midi: Int = 0

    func identifyNote() {
        let indexAndWas12: (Index: Int, was12: Bool) = kpl.getNoteIndexByReducedFrequency(reducedFrequency)
        let index = indexAndWas12.Index
        if indexAndWas12.was12 {
            reducedFrequency = reducedFrequency / 2
        }
        octave = Int(log2f(Float(frequency) / reducedFrequency))
        expectedRedFreq = Float(kpl.reducedFreqs[index])
        midi = 12 + octave * 12 + index - game.curInstrument.transposition
    }

    init(_ sfa: MDXSimpleFrequencyAmplitude) {
        super.init(f: sfa.frequency, a: sfa.amplitude)
        tiempo = sfa.tiempo - kpl.listeningStartTime - kpl.timeBetweenReads
        calcReducedFrequency()
        identifyNote()
    }
}
```
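The class above depends on types that are not shown here (MDXPitchListenerConstants, MDXGame), so the following is a self-contained sketch of the same octave-reduction idea that can be run on its own. It rests on assumptions: the `referenceFreqs` table stands in for `kpl.reducedFreqs` and is taken to hold C0 through B0 in equal temperament (A4 = 440 Hz), which is consistent with the `12 + octave * 12 + index` formula but not confirmed by the source. The `IdentifiedNote` type and this free-standing `identifyNote` function are hypothetical, and the octave is counted while folding instead of being derived with `log2f`.

```swift
import Foundation

/// Equal-temperament frequencies (Hz) for C0...B0 with A4 = 440 Hz.
/// Assumption: a stand-in for the app's `reducedFreqs` table, whose values are not shown.
let referenceFreqs: [Double] = (0..<12).map { 440.0 * pow(2.0, (Double($0) - 57.0) / 12.0) }

// Hypothetical result type for this sketch.
struct IdentifiedNote {
    let index: Int                  // 0 = C, 1 = C#, ... 11 = B
    let octave: Int                 // octaves above the reference octave
    let midi: Int                   // MIDI note number after transposition
    let expectedFrequency: Double   // in-tune frequency of the identified note
}

func identifyNote(frequency: Double, transposition: Int = 0) -> IdentifiedNote? {
    guard frequency > 0, frequency.isFinite else { return nil }

    let lowest = referenceFreqs[0]   // C0
    var reduced = frequency
    var octave = 0

    // Fold the frequency by octaves into [C0, C1), counting the shifts.
    while reduced >= lowest * 2 {
        reduced /= 2
        octave += 1
    }
    while reduced < lowest {
        reduced *= 2
        octave -= 1
    }

    // Nearest semitone above C0, measured on a logarithmic scale.
    var index = Int((12.0 * log2(reduced / lowest)).rounded())

    // A slightly flat C rounds up to index 12, which is the C of the next octave.
    if index == 12 {
        index = 0
        octave += 1
    }

    let midi = 12 + octave * 12 + index - transposition
    let expected = referenceFreqs[index] * pow(2.0, Double(octave))
    return IdentifiedNote(index: index, octave: octave, midi: midi, expectedFrequency: expected)
}
```

For example, `identifyNote(frequency: 440)` folds 440 Hz down four octaves to 27.5 Hz, finds index 9 (A) and yields MIDI note 69, while a slightly flat C1 at 32 Hz is still reported as C1 (MIDI 24) rather than B0.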

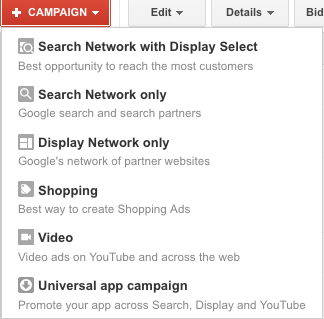

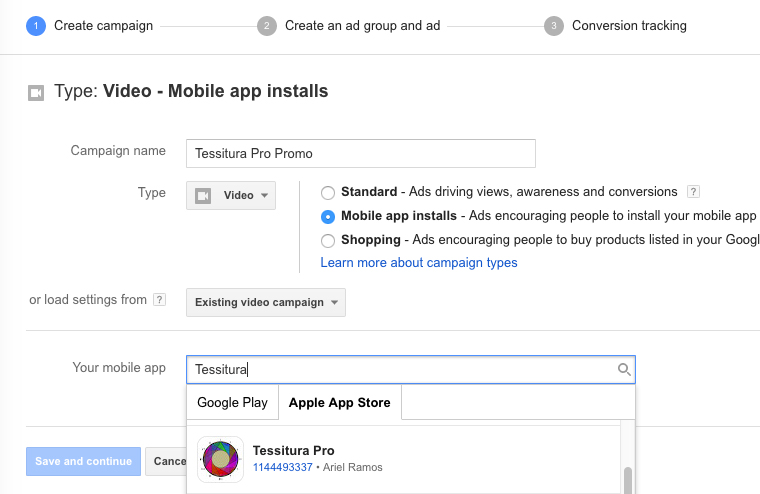

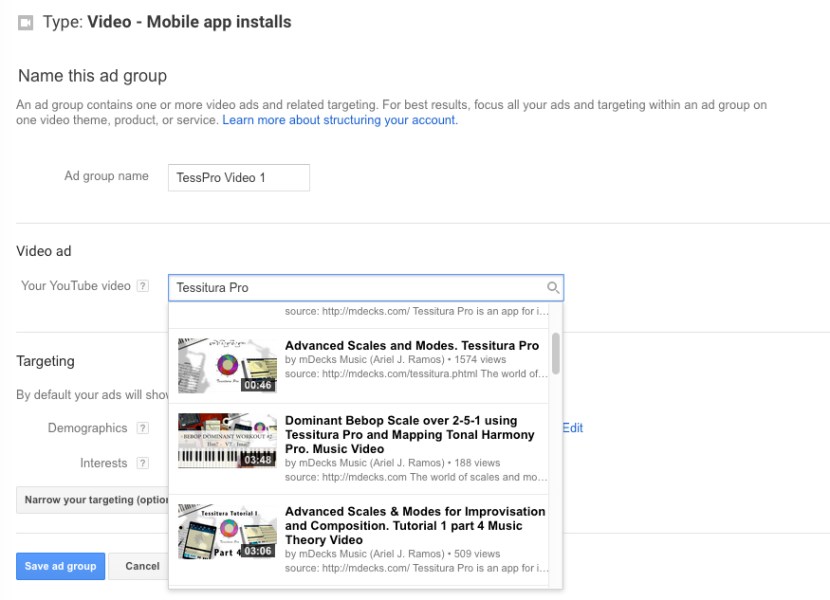

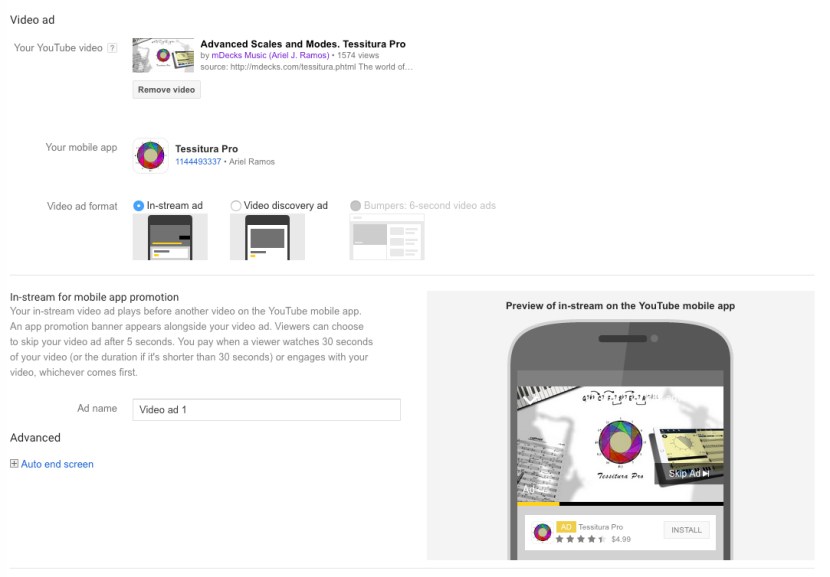

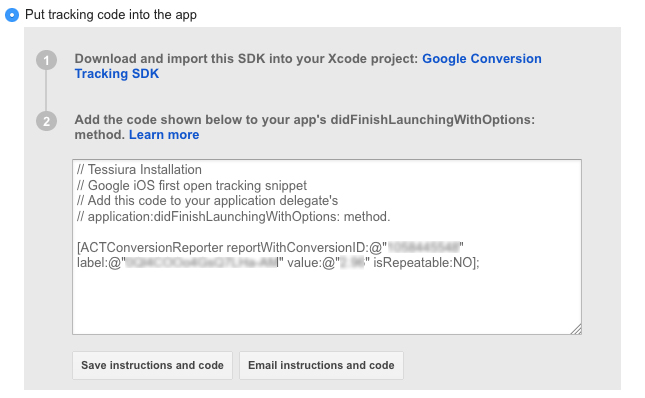

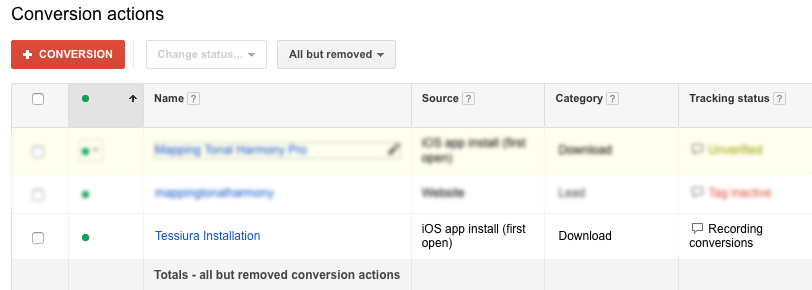
