
Putting iPhone’s CoreMotion Technology to the Test

In our latest music app, “Sounds of Christmas by mDecks Music”, we wanted to create the illusion of playing a virtual instrument with your iPhone in 3D space. The user strikes the air with the iPhone as if hitting bells, and the iPhone produces sound (notes, chords, etc.).

To achieve this effect, we had to use the iPhone’s CoreMotion technology, which gives access to all of the iPhone’s motion detection system. You can read more about it in my previous post, Turning an iPhone into a Musical Instrument.

Now, if you have ever worked with MIDI, samplers and sequencing software, you know what latency is. For those who do not: latency is the time your software and hardware take to turn an “action” (command, process, etc.) into sound.

There is always latency in sound production, whether or not computers are involved. The speed of sound is finite, so some latency is already built in (think of a tennis match watched from the stands: you see the ball being hit and hear the sound noticeably later). There is latency when you play a real acoustic piano too, since pressing a key has to be mechanically translated into a hammer hitting a string. Of course, these conversions are so fast that the pianist does not notice them (or at least can cope with them).

When using MIDI, software and samplers, we add a whole new set of conversions that must happen before sound is finally produced. Your action on the keyboard is turned into a MIDI signal; the MIDI signal is turned into a note request for the sampler, which asks the computer to access the hard drive (or memory, if possible) and read the sound file; the file is then processed into a musical note and sent to your sound card, which converts that information into sound and outputs it through your speakers.

An acceptable latency is one that produces the sound fast enough for you to perceive it as real time and does not affect your playing, no matter how fast you play. Musicians are far more sensitive to that delay than the average listener, and a delay of 20 ms is big enough to be noticeable. An acceptable latency for musicians is below 12 ms.
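To get a feel for these numbers, it can help to translate a latency into the distance sound travels in the same time. The sketch below is only an illustration in plain C (which also compiles inside an Objective-C file); the function names are made up for this example, and it assumes a speed of sound of roughly 343 m/s in air at room temperature.

```c
/* Assumed speed of sound in dry air at about 20 degrees C, in metres per second. */
#define SPEED_OF_SOUND_M_PER_S 343.0

/* Time in milliseconds for sound to travel distance_m metres. */
double acoustic_delay_ms(double distance_m)
{
    return distance_m / SPEED_OF_SOUND_M_PER_S * 1000.0;
}

/* Distance in metres that sound covers in latency_ms milliseconds. */
double equivalent_distance_m(double latency_ms)
{
    return latency_ms / 1000.0 * SPEED_OF_SOUND_M_PER_S;
}
```

Under that assumption, a 12 ms latency is about what you get from standing a little over 4 m away from an acoustic instrument, and 20 ms corresponds to just under 7 m.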

If we want to use the iPhone’s motion to trigger notes, we have to treat that motion as if it were a key being played on a keyboard, then turn it into MIDI, send it to a sampler, and so on. It was crucial to create a method that could detect and process motion in a very short amount of time (<1 ms), and the question was whether the iPhone’s CoreMotion was fast enough to make this possible.

After our first test we realized that the iPhone’s CoreMotion is not only incredibly fast but also so precise that we were able to simulate virtual bells being hit by the iPhone at any tempo we wanted.

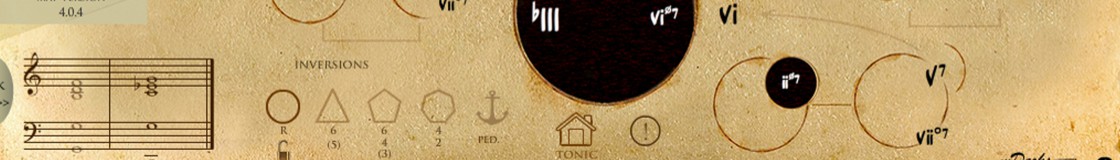

Sounds of Christmas by mDecks Music also turned into a rhythmic and sight-reading training tool in which the user can perform a song at any tempo. We decided to include rhythm scores of all the songs for music teachers and students, since this is such an intuitive way of learning and practicing rhythms.

You can print all the scores from your iPhone within the app, or you may download the eBook in PDF format with all songs notated as rhythm.


Turning the iPhone into a Musical Instrument

While developing our new Christmas Puzzles by mDecks Music app we did an experiment with the CoreMotion module of the iPhone. CoreMotion lets you detect and track movements of the iPhone in real time.

There are many different approaches to building apps that respond to iPhone movement. The most frequently used technique is a method that detects the iPhone being shaken, but it is not precise and did not work for us. For more precise tracking you need to use CoreMotion.

In our solution, we implemented a routine that detects the iPhone’s movement when the user pretends to hit imaginary bells in front of them. Our algorithm ended up being very simple, and the response really fast and efficient. We created several different detection algorithms and included them in the app.

We created music files that play a song using the rhythm played by the user as the source. You may print the rhythm score to practice your rhythmic sight-reading and/or learn how to read and play rhythms while performing a real song, which we think makes practicing rhythms very intuitive and fun.

We turned our first version into an all-Christmas app called Sounds of Christmas by mDecks Music, which at the moment is waiting for review on the App Store. (Sounds of Christmas webpage)

Here are our video demos:

To track the device’s motion you need a CMMotionManager.

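    #import <CoreMotion/CoreMotion.h>

    // File-scope storage for the single manager shared by the methods in this class.
    static CMMotionManager *motionManager = nil;

    - (CMMotionManager *)motionManager
    {
        // Create the manager only once, then reuse it on every call.
        static dispatch_once_t onceToken;
        dispatch_once(&onceToken, ^{
            motionManager = [[CMMotionManager alloc] init];
        });
        return motionManager;
    }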

Then start the iPhone’s motion updates at a specific updateInterval:

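    - (void)startUpdatingWithFrequency:(int)fv
    {
        // fv selects the sampling period: 10 ms plus 5 ms per step.
        NSTimeInterval delta = 0.005;
        NSTimeInterval updateInterval = 0.01 + delta * fv;
        CMMotionManager *manager = [self motionManager];
        if (manager.isDeviceMotionAvailable) {
            // Device-motion updates have their own interval, separate from the accelerometer's.
            manager.deviceMotionUpdateInterval = updateInterval;
            [manager startDeviceMotionUpdatesToQueue:[NSOperationQueue mainQueue]
                                         withHandler:^(CMDeviceMotion *motion, NSError *error) {
                if (motion == nil) { return; } // nothing to process if the update failed
                // Call your own detection routine here, passing it `motion`.
            }];
        }
    }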
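To see what the interval formula above means in practice, here is a small sketch in plain C (it also compiles inside an Objective-C file). The helper names are hypothetical and are not part of CoreMotion; they only mirror the arithmetic in startUpdatingWithFrequency: and compare the result against a latency budget such as the 12 ms mentioned earlier. It assumes the worst-case wait for a fresh sample is one full interval, and that the device actually delivers samples at the requested rate, which the hardware may not always do.

```c
/* Sampling period in seconds for a given fv, mirroring
   startUpdatingWithFrequency: (10 ms base plus 5 ms per step). */
double update_interval_s(int fv)
{
    const double delta = 0.005;
    return 0.01 + delta * fv;
}

/* Samples per second delivered at that period. */
double update_rate_hz(int fv)
{
    return 1.0 / update_interval_s(fv);
}

/* Returns 1 if the worst-case wait for the next sample (one full
   period) fits inside budget_ms, else 0. */
int interval_fits_budget(int fv, double budget_ms)
{
    return update_interval_s(fv) * 1000.0 <= budget_ms;
}
```

With these helpers, fv = 0 gives a 10 ms interval (about 100 samples per second), fv = 1 gives 15 ms, and fv = 2 gives 20 ms (about 50 samples per second). Against a 12 ms budget, only fv = 0 fits, which is one reason the interval has to be tuned with care.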

You can get all the updated information by using:

    motion.userAcceleration.x; // or y or z: acceleration the user gives the device, in g
    motion.attitude.roll;      // or pitch or yaw: orientation angle, in radians

Once you’ve obtained this data in real time, it’s up to you to create an algorithm that interprets the device’s movement. It is crucial to choose a sensible updateInterval: it can’t be too short or too long, and you have to find it by trial and error.
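As one possible starting point, here is a minimal sketch of a strike detector in plain C (it also compiles inside an Objective-C file). This is not the algorithm used in the app; it is a generic threshold-with-hysteresis approach, and every name and threshold in it is an assumption made for illustration. It fires once when the magnitude of userAcceleration crosses a trigger level, then waits for the motion to settle below a lower level before it can fire again, so a single swing does not produce several notes.

```c
#include <stdbool.h>

/* State for a simple strike detector with hysteresis (hypothetical helper). */
typedef struct {
    double trigger_g;  /* magnitude (in g) that counts as a strike */
    double rearm_g;    /* magnitude must drop below this before the next strike */
    bool armed;        /* true when the detector is ready to fire */
} HitDetector;

HitDetector hit_detector_make(double trigger_g, double rearm_g)
{
    HitDetector d = { trigger_g, rearm_g, true };
    return d;
}

/* Feed one userAcceleration sample (x, y, z in g).
   Returns true exactly once per strike. */
bool hit_detector_update(HitDetector *d, double x, double y, double z)
{
    /* Compare squared magnitudes to avoid a square root per sample. */
    double mag2 = x * x + y * y + z * z;
    if (d->armed && mag2 >= d->trigger_g * d->trigger_g) {
        d->armed = false;  /* fire once, then wait for the motion to settle */
        return true;
    }
    if (!d->armed && mag2 <= d->rearm_g * d->rearm_g) {
        d->armed = true;   /* motion has settled, ready for the next strike */
    }
    return false;
}
```

Inside the motion handler you would call hit_detector_update with motion.userAcceleration.x, .y and .z and trigger a note whenever it returns true. The two thresholds (for example 1.5 g to trigger and 0.3 g to re-arm) are illustrative values only and, like the update interval, would need to be found by trial and error.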